UAT or Usability Testing? Why Not Both?

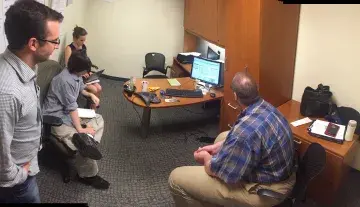

The DHS Customer Experience team sat in on an activity being run by a service team at the U.S. Secret Service. A product was in development. One person acted as a user of the product, someone from the development team facilitated the session, and the rest of the development team listened and watched.

How would you describe that event? Was it User Acceptance Testing (UAT)? Was it a demo? Was it usability testing? Are all of these the same thing?

Similar Ingredients, Different Method, Different Outputs

It might feel like UAT and usability testing are interchangeable terms, or that doing one should suffice for user input. They’re actually different tools for learning about the effectiveness of a digital product. Each method provides value towards delivering effective, human-centered products or services.

User Acceptance Testing (UAT)

UAT is a classic tool that developers use to ensure that what they have created meets the business requirements. It’s a step in the development process to verify/accept the software system before moving the software application to the production environment.

The “user” in this case is the business that defines and approves the requirements and acceptance criteria.

Teams often conduct UAT by creating scripts that business users then follow to test whether the navigation and interactions work the way they were defined in the requirements, and whether the appropriate data is collected and appears in the right formats. Many teams conduct UAT just before a release goes live. But a development team might instead combine sprint demos with UAT by having the Subject Matter Expert (SME), who is a business user, walk through the functionality developed during a sprint as if they were an end user to determine if the functionality meets the acceptance criteria. That’s what we saw in the example above with the Secret Service.

Usability Testing

Usability testing (sometimes called usability studies) is a method that development and design teams use to identify where end users encounter confusion or frustration while they use a product to do their own tasks and reach their own goals. Teams use usability testing to evaluate and measure how well the product supports what the user is trying to achieve. This method can (and should) be used throughout the product lifecycle, from the earliest prototypes or sketches, to higher-fidelity or functional prototypes, to the current iteration of the product.

Pro Tip: Products can meet every single requirement and meet the criteria for acceptance by the business user, and still fail in production because they aren’t useful or usable for the audiences they’re intended to serve. For example, a button on a website may allow form data to be submitted, but it may be in a location in the user interface that makes it hard for the user to see or click. In this case, it will likely pass UAT, but be flagged as an issue in usability testing.

Usability testing is a great tool for heading off potential support issues. Through this method, you can witness firsthand the problems end users experience. By watching and listening to end users while they try to accomplish their goals (without training or assisting them), you can explore the causes of issues and then use that qualitative data to inform design changes that will remedy the frustration or confusion. These are the kinds of things that typically send end users looking for help or a way to correct mistakes, such as calling a help desk or a call center.

Let’s go back to the example with the Secret Service we mentioned at the beginning. The method they were performing was actually a form of UAT. The overarching goal was to ensure that requirements were being met by the product. The agile team, which did not include UX designers, didn’t perform usability testing during their process.

Usability Testing Basics, Step-by-Step

If the team at the Secret Service had performed usability testing, here’s how it would have worked.

- First, they would have chosen points throughout the development lifecycle to assess usability for this product. Note that you don’t need to have anything coded. You can do usability testing on concepts that are just mock-ups, or paper or clickable prototypes, or products that are in development at any stage. You just need something that an end user can interact with and react to from their lived experience. So, ideally, this is a continuous process of learning and applying insights through iterative changes to design and functionality.

- Then the team would narrow down what they wanted to learn from their end users, which includes identifying multiple real tasks users would typically do while using the product or service. Usability sessions typically run for around 30 minutes to an hour. (Link to a template for planning a usability test.)

Pro Tip: You can actually use this method on practically anything, from paper forms, to websites, to urban spaces. It doesn’t only have to be digital products or services.

- Next, the team would find actual end users. Since this is an internal tool (and yes, it’s important to test internal-facing services, too), they would find end users who are Department personnel to participate in the sessions. If this were an externally facing product or service, they would need to recruit users from the general public who have done the behavior the design is trying to support. For example, a participant might be an international traveler filing an I-94, a disaster survivor filing a claim, or someone applying for immigration status.

At this point, you may be wondering how many people you need to recruit. Recruiting five or six people, or a few more, to try out what you’re testing one at a time works well. You don’t need a large number of people for this.

- Finally, for each session, they would watch and listen to one end user at a time trying to complete tasks using the application. Again, the tasks chosen for the session should be realistic and actionable, and should include tasks users would commonly try to accomplish. Within a few individual sessions, you start to see patterns in where end users encounter confusion or frustration. Using that data, the team would be able to address existing usability issues ahead of launch, reducing user frustrations and support calls as a result.